We gave Claude, Gemini, and GPT $250k each, and it didn't go as you’d expect...

Introducing YC-Bench: The first open-source, long-horizon benchmark with a simulation clock

TL;DR: We find that frontier AI agents struggle more on YC-Bench than on other time-simulated benchmarks such as Vending Bench 2, highlighting capability gaps in planning and resource allocation for real-world scenarios.

Get started with YC-Bench:

curl -sSL https://raw.githubusercontent.com/collinear-ai/yc-bench/main/start.sh | bash

GitHub: collinear-ai/yc-bench

Long-Term Coherence as a Goal Post

Popular agent benchmarks - GAIA, TermBench, SWE-Bench, τ²-bench - evaluate a model’s ability to complete tasks through multi-tool, multi-turn interactions. Even when these tasks span hundreds of tool calls, they share a critical limitation - they lack a simulation clock. As AI agents get integrated into the workforce and digital economy, time becomes an essential dimension of evaluation. Task sequence matters; environmental states shift over time, and inaction is as consequential as a wrong action. Without a clock running through the simulator, you can measure whether an agent did the right thing, but not whether it did it when it mattered.

Some existing benchmarks do include a simulation clock. Vending Bench, which tests for long-term coherence¹, is one example. It has realistic NPCs, including suppliers the models talk to, and models compete with each other. However, its environment dynamics do not capture the more sophisticated planning needed when resources are non-stationary and deadlines are tight.

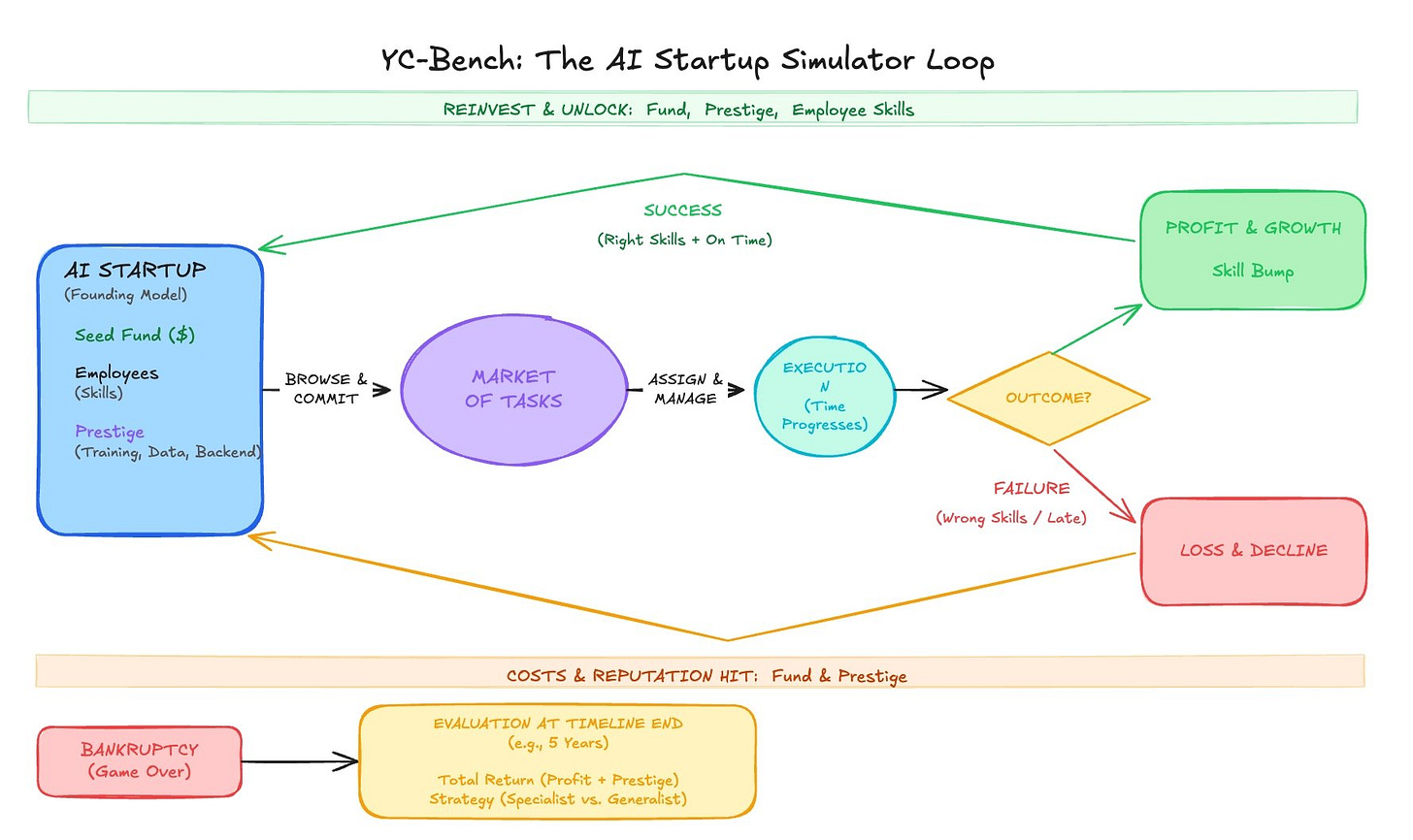

We propose a new long-horizon adaptive planning and coherence benchmark, YC-Bench (Your Company Bench), in which your agent takes on the role of a startup founder and executes activities to run a successful business. These activities include task prioritization, task scheduling, meeting client deadlines, resource allocation, managing burnout, and maximizing company profits and prestige.

With a simulation clock, the AI agent must learn to prioritize long-term rewards over short-term gains. This means it needs to discriminate between the two, knowing that some actions pay less in the short term but more in the long term. This is a core human skill, usually termed “long-term coherence”, that models are not exhaustively tested on, especially in a reproducible, open-source, and extensible way. YC-Bench is an effort in this direction.

Environment Dynamics

YC-Bench asks the model to act as the founder of an AI startup. The LLM is given seed capital, a fixed number of employees, and a base prestige level for each of several AI-related domains, such as training, data, and backend. The model's aim is to maximize its capital by completing tasks on the market. To make the dynamics more realistic, we associate a prestige level with each task and with the company: the LLM can only take on tasks that are at or below its prestige level. To stay in the game, the LLM therefore needs to browse the market for tasks, commit to them, and manage them until each project is delivered before its deadline. Each task can be assigned to multiple employees, and each employee can work on multiple tasks.

Several things can go right. If the model assigns the right employees to a task and it is completed on time, the company earns the profit from that task, and its prestige level in the related domain (e.g., ‘training’) increases. The employees who work on the task also improve their skills. As a result, the company can handle more lucrative tasks that require a higher minimum prestige level and stronger skills.

But there are also several things that can go wrong. If the task cannot be completed in time because the model assigns the wrong employee to it (e.g., assigning a GPU expert to build a frontend), the company will not get the money, and its prestige in that domain will decrease. As a result, the company will be able to do fewer tasks in that domain because it is less reliable. Moreover, employees are on a monthly payroll, and good ones cost more. As a result, if the company fails to perform tasks consistently, it will eventually go bankrupt.
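The reward and penalty rules above can be sketched as a small state update. This is only an illustration under our own assumptions: the class and field names, and the prestige step of 0.1, are hypothetical and are not taken from the YC-Bench code.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    domain: str
    required_prestige: float
    reward: float


@dataclass
class Company:
    cash: float
    prestige: dict = field(default_factory=dict)  # domain -> prestige level

    def can_accept(self, task: Task) -> bool:
        # A task is available only at or below the company's prestige in its domain.
        return self.prestige.get(task.domain, 0.0) >= task.required_prestige

    def settle(self, task: Task, on_time: bool, step: float = 0.1) -> None:
        # `step` is a hypothetical prestige increment; the real value may differ.
        current = self.prestige.get(task.domain, 0.0)
        if on_time:
            # Delivered on time: get paid and gain prestige in that domain.
            self.cash += task.reward
            self.prestige[task.domain] = current + step
        else:
            # Missed deadline: no payment and a prestige penalty.
            self.prestige[task.domain] = max(0.0, current - step)

    def pay_salaries(self, monthly_payroll: float) -> bool:
        # Returns False once the company has gone bankrupt.
        self.cash -= monthly_payroll
        return self.cash >= 0
```

Even in this toy version, a failed task costs twice: the reward is lost, and the lower prestige shrinks the set of tasks that `can_accept` will allow next time, while payroll keeps draining cash.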

YC-Bench is built for terminal use. The model can run a fixed set of CLI commands, which it learns from the system prompt. For example, it can assign tasks to employees, cancel tasks, change assignments, see its performance, etc. After it performs an action, time passes, and events such as task completion, task cancellation, and bankruptcy occur. If the model goes bankrupt during the evaluation, the evaluation stops. It’s time to give up. We also see whether models exploit the particular features of each domain (some are easy but less profitable, others are hard but more profitable) to become specialists rather than generalists and make more money.
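To make the idea of a simulation clock concrete, here is a minimal sketch of an event loop in which time jumps from one scheduled event to the next and the run ends on bankruptcy. The event kinds, the tuple layout, and the function name are our assumptions for illustration; the actual YC-Bench simulator may be organized differently.

```python
import heapq


def run_clock(cash, events):
    """Process (time, kind, amount) events in time order until bankruptcy.

    Returns (final_time, final_cash, bankrupt).
    """
    heap = list(events)
    heapq.heapify(heap)  # earliest event first
    now = 0
    while heap:
        now, kind, amount = heapq.heappop(heap)
        if kind == "task_completed":
            cash += amount  # reward arrives when the task is delivered
        elif kind == "payroll":
            cash -= amount  # salaries are due regardless of progress
        if cash < 0:
            return now, cash, True  # the evaluation stops on bankruptcy
    return now, cash, False
```

Note that payroll events fire whether or not the agent acted, which is why inaction is as consequential as a wrong action in a clocked environment.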

Early Results

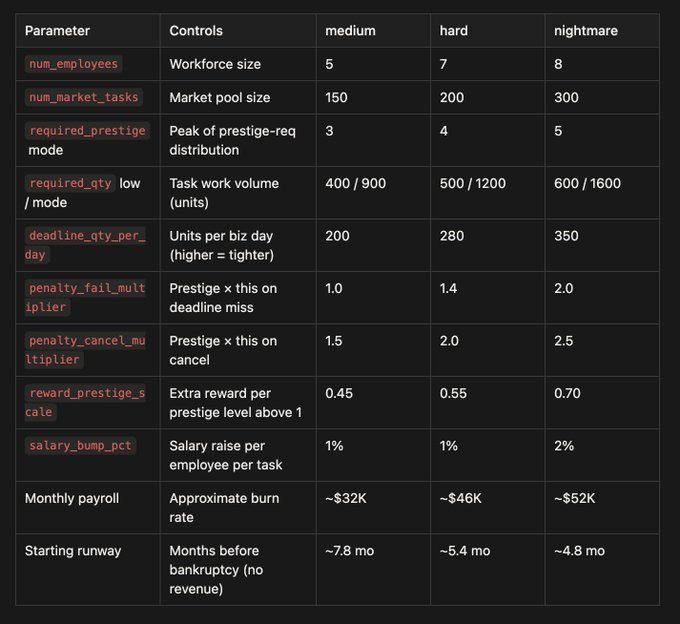

We compare three frontier LLMs (Sonnet 4.6, Gemini 3 Flash, and GPT-5.2) against a human-devised rule-based baseline across 3 configs and 3 seeds (27 LLM runs in total, plus 9 baseline runs). Each agent starts with $250K and must survive a 1-year simulated horizon.

Our key result: a simple hand-written heuristic beats every frontier model on survival. We understand the weight of this claim; we are not claiming that the current version of the benchmark requires reasoning to win it. However, reasoning AIs should be able to cruise through the benchmark. We are happy to discuss and debate the improvements for the next version!

We test on 3 different configs for 3 unique seeds:

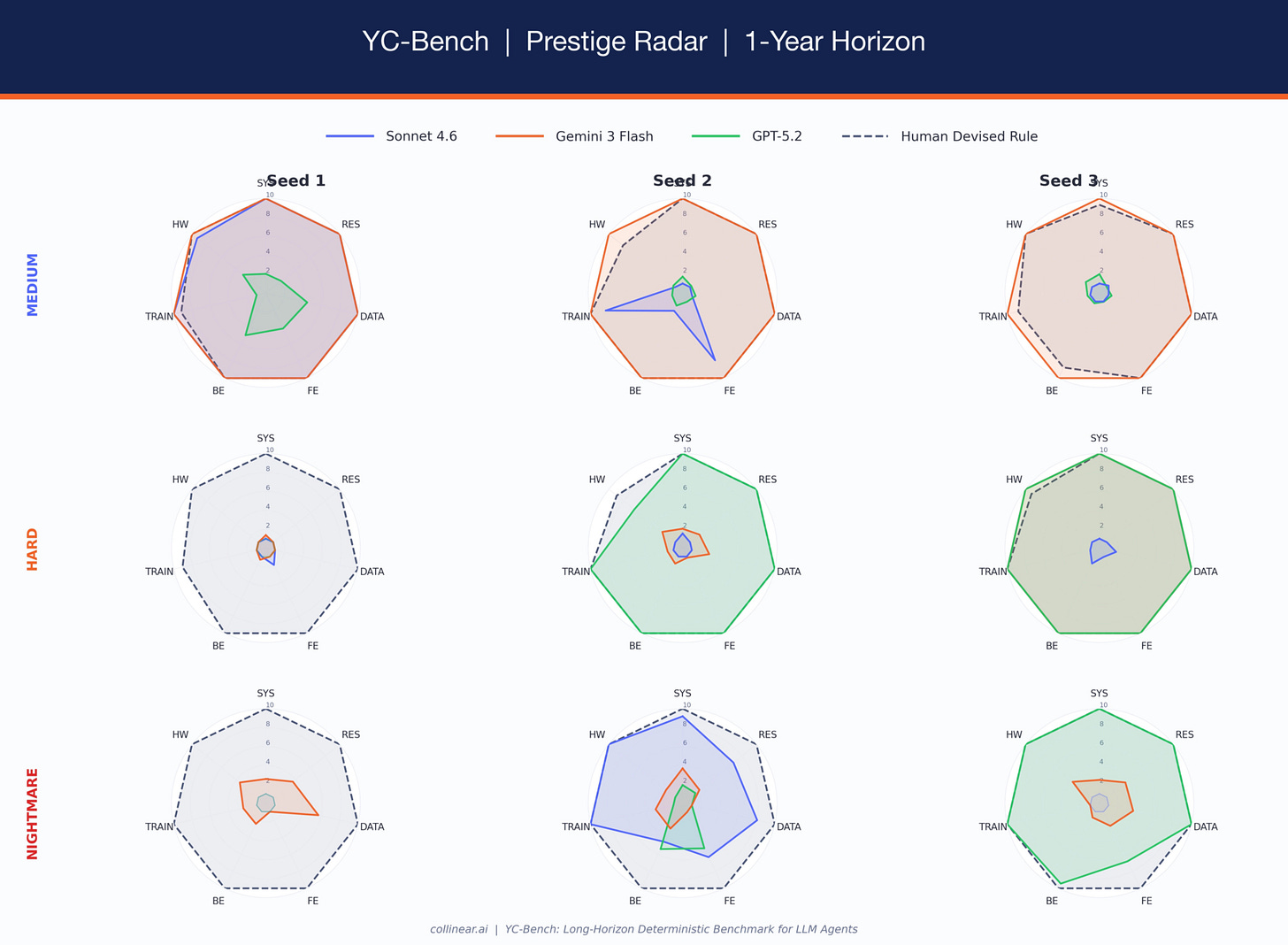

The human-devised rule never goes bankrupt: 9/9 across all configs and seeds, while the best LLM (Gemini 3 Flash) survives 8/9. The rule-based agent doesn't use an LLM at all. It follows a fixed strategy: accept the highest-reward task you can finish, assign your best employees, and never over-parallelize.
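The fixed strategy described above can be sketched in a few lines. This is our own reconstruction for illustration, not the baseline's actual code: the dictionary fields (`work_units`, `deadline_hours`, `rates`), the `team_size` parameter, and the feasibility check are all assumptions.

```python
def pick_task(market, employees, prestige, active_tasks, team_size=2):
    """Return (task, team_names) for the best feasible task, or None."""
    if active_tasks > 0:
        return None  # never over-parallelize: one task at a time

    # Try tasks from highest to lowest reward.
    for task in sorted(market, key=lambda t: t["reward"], reverse=True):
        domain = task["domain"]
        if prestige.get(domain, 0.0) < task["required_prestige"]:
            continue  # not yet allowed to take this task

        # Assign the best employees for this domain.
        ranked = sorted(
            employees,
            key=lambda e: e["rates"].get(domain, 0.0),
            reverse=True,
        )
        team = ranked[:team_size]
        throughput = sum(e["rates"].get(domain, 0.0) for e in team)

        # Accept only if the team can finish before the deadline.
        if throughput * task["deadline_hours"] >= task["work_units"]:
            return task, [e["name"] for e in team]
    return None
```

The point of the sketch is that nothing here requires reasoning: every decision is a sort and a comparison, applied the same way on every turn.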

Survival Rates

Hard seed 1 is the clearest signal: all three frontier LLMs go bankrupt, while the rule-based agent finishes with $14.8M. The LLMs fail not because the task is impossible, but because they make compounding errors in the first 2-3 months that lock them out of the prestige ladder.

When LLMs win, they win big, but they also lose hard

GPT-5.2 achieves the single highest balance of any agent: $43.5M on hard seed 3, nearly 3x the rule-based agent's $15.0M on the same seed. But GPT also goes bankrupt on 2/9 runs. Sonnet shows the same pattern at a more extreme level — $10.1M on nightmare seed 2 (the highest LLM result for nightmare), but bankrupt on 4/9 runs overall. Gemini is the most consistent LLM. It sweeps all 3 nightmare seeds (the only LLM to do so) and rarely collapses catastrophically. But even Gemini never matches the rule-based agent's reliability.

Prestige specialization explains part of the story?

The radar charts reveal some insight into why models fail. Each polygon shows the company’s final prestige across 7 AI domains (system, research, data, frontend, backend, training, hardware). Large polygons indicate the model’s prestige increased broadly. Tiny dots near the center indicate the model went bankrupt before gaining any prestige. The human-devised rule (navy dashed) fills the full radar on every run — it maxes prestige methodically across all domains. Among LLMs, Gemini builds the most balanced profiles. GPT-5.2 shows genuine specialization on medium — it focuses on backend/data/frontend while ignoring training — a strategically reasonable choice, but one that becomes fragile when the task distribution shifts on harder configs. Sonnet is bimodal: either it maxes everything (medium seed 1), or it collapses entirely (nightmare seeds 1 & 3, stuck at prestige 1.0 everywhere).

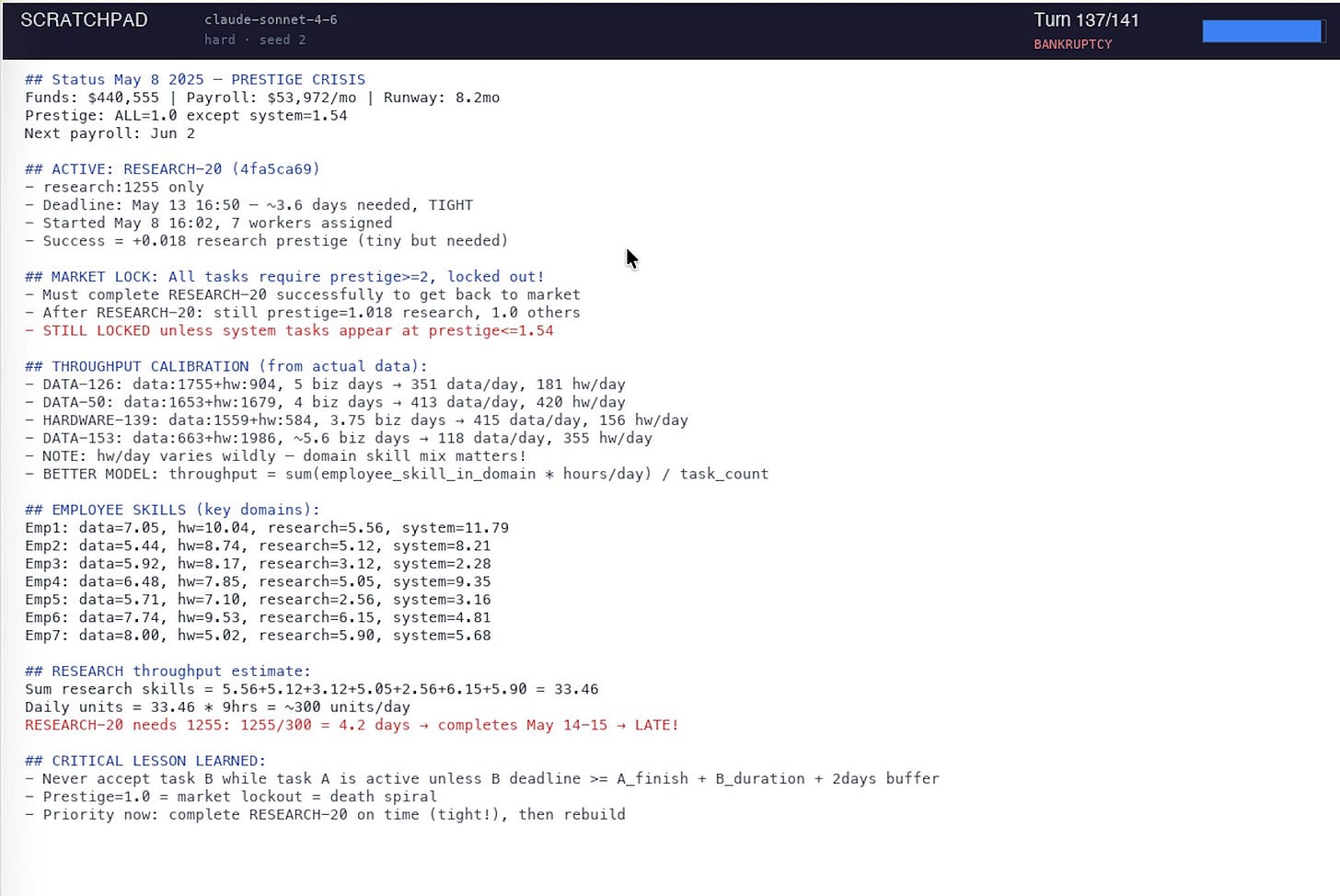

When we inspect Sonnet’s scratchpad on failed runs, the model correctly diagnoses the problem (“PRESTIGE CRISIS -MARKET LOCK”) but only after payroll has consumed the runway. It reasons well about strategy but fails to execute it in a timely manner.

Why do models struggle?

Four failure modes recur across all bankrupt runs:

Over-parallelization. Accepting 3-5 tasks at once, splitting employees across them. Each employee’s effective rate drops to base_rate / N per task — a senior at 8.0 units/hr assigned to 4 tasks contributes just 2.0 to each. Deadlines slip, failures cascade.

No prestige gating. Accepting tasks that require prestige the company hasn’t earned yet. The task completes late, the prestige penalty makes the next tier even harder to reach, and the agent spirals into a market lockout.

Late adaptation. Models identify problems in their scratchpad but only after the damage is done. By the time Sonnet writes “never accept task B while task A is active,” payroll has already consumed 60% of the runway.

Inconsistent ETA reasoning. Models understand throughput math in principle, but don’t consistently apply it. On medium seed 2, Sonnet has a 49% task win rate, essentially a coin flip, despite writing correct throughput formulas in its scratchpad.

The core gap is not reasoning ability but temporal discipline: doing the right thing when it matters, sustaining correct behavior across hundreds of turns, and resisting the temptation to over-commit when a lucrative task appears.
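The throughput arithmetic behind the over-parallelization and ETA failure modes is short enough to write down. The sketch below uses the base_rate / N rule quoted above; the function names and the 160-unit task are hypothetical values chosen for illustration.

```python
def effective_rate(base_rate: float, n_tasks: int) -> float:
    """Units/hr an employee contributes to each of their n_tasks tasks."""
    if n_tasks < 1:
        raise ValueError("an assigned employee works on at least one task")
    return base_rate / n_tasks


def eta_hours(work_units: float, assignments) -> float:
    """Hours to finish a task.

    assignments: list of (base_rate, n_tasks) pairs, one per employee
    working on this task, where n_tasks is that employee's total task count.
    """
    total_rate = sum(effective_rate(rate, n) for rate, n in assignments)
    return work_units / total_rate


# A senior at 8.0 units/hr split across 4 tasks contributes 2.0 to each.
print(effective_rate(8.0, 4))        # 2.0
# A hypothetical 160-unit task: 20 hours when focused, 80 hours when split.
print(eta_hours(160, [(8.0, 1)]))    # 20.0
print(eta_hours(160, [(8.0, 4)]))    # 80.0
```

Checking `eta_hours` against the deadline before accepting a task is the kind of step the models describe in their scratchpads but do not apply on every turn.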

Next Steps

YC-Bench v0 is a starting point. Here’s what we’re working on:

More models. We plan to add results for Claude Opus, Gemini 2.5 Pro, o3, and open-weight models (Llama 4, Qwen 3) as they become available. If a model can run tool-use in a loop, it can run YC-Bench.

Longer horizons and non-stationary dynamics. The 1-year configs test short-to-medium planning. We want to push to 3-5 year horizons where market conditions shift: recessions that shrink rewards, talent wars that inflate salaries, and technology shocks that obsolete entire domains. This tests whether agents can adapt strategy mid-run, not just execute a fixed plan.

Better baselines. The current human-devised rule is strong but simple. We want to explore MCTS-based planners, RL-trained policies, and hybrid approaches (an LLM for strategy, a rule engine for execution) to understand where the frontier lies.

Community configs. YC-Bench is fully open-source and extensible. Every parameter (employee count, prestige distribution, penalty multipliers, salary curves) can be changed. We encourage the community to design configs that stress-test specific capabilities and submit results.

Try it yourself:

uv add yc-bench

uv run yc-bench run

If you find it useful, feel free to cite our work and contact us!

@misc{collinear-ai2025ycbench,

author = {{Collinear AI}},

title = {{YC-Bench}: Your Company Bench — A Long-Horizon Coherence Benchmark for {LLM} Agents},

year = {2025},

howpublished = {\url{https://github.com/collinear-ai/yc-bench}},

note = {Accessed: 2026-02-25} }

¹ Coherence refers to the degree to which an agent's actions, decisions, and goals form a consistent, intelligible pattern across successive moments rather than appearing random, contradictory, or fragmented.
