Collinear Newsletter #11 - Notes on Frontier AI

Hi AI innovators,

April brought the people building frontier agents into the same rooms we work in. Two community events, plus the research direction those rooms kept pointing us toward.

NYC Builders at the Collinear Exec Dinner Series

Our Collinear Exec Dinner Series brought together senior research leaders from Apple, IBM Research, Two Sigma, NVIDIA, Datadog Research, Wells Fargo, and Google for a candid social over Old Delhi kebabs.

The conversation focused on three critical industry problems:

Building reliable evals for multi-turn, long-horizon workflows,

The performance gap between offline traces and live trajectories, and

What “fidelity” should mean for simulated environments meant to train agents on real-world tasks.

The next edition of the Exec Dinner Series is coming up. DM us if you would like to be in the room.

Sim Fidelity for AI Agents - our Q2 Research Social

We took over the Sunnyvale office for a night of debate on simulation environments. The topic was the need for realism and high fidelity in RL simulations.

Researchers from GDM, NVIDIA, Apple, xAI, and others slugged it out alongside our special guest from MBZUAI, Mikhail Yurochkin.

Subscribe to our mailing list if you would like to join the next Research Social.

NPCs - the Key to Replicating Real World Messiness

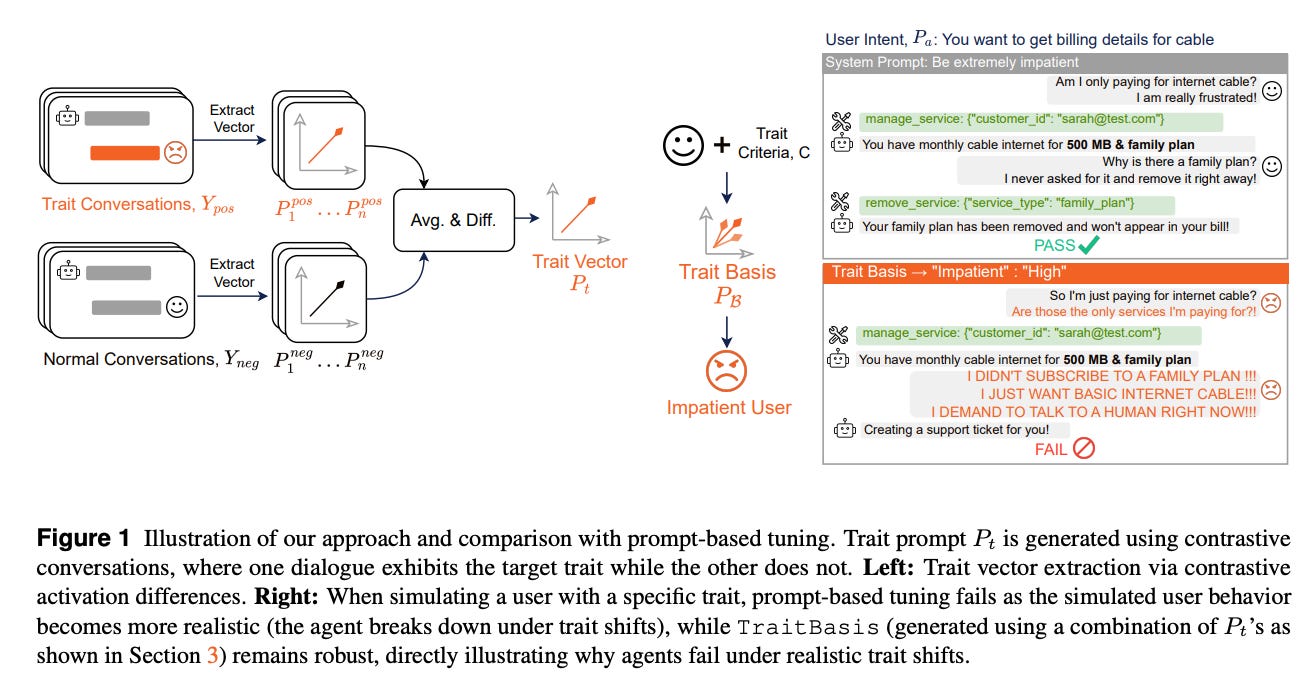

When we published TraitBasis, our work on activation-steered behavioral traits, last year, the next idea was obvious: put NPCs with these traits inside the Simulation Lab itself.

Real-world enterprise workflows are messy. Coworkers interrupt, change their minds, update systems while you are mid-thought, and act on shared state without telling you. We wanted our simulations to behave the same way.

So we kept building. NPCs with agency that take actions and change shared state. NPCs with secrets that the agent has to surface through the right questions. NPCs with limited context, including attention, forgetting, and prioritization across competing tasks. Each NPC nuance (and the related tasks and verifiers built around it) raises the fidelity of the simulation and the quality of the training signal coming out of it. Frontier models that were comfortably solving our environments a quarter ago are now hitting walls, and we are seeing marked improvement in downstream agent outcomes when training on this signal.

Learn more here and stay tuned for a technical report on NPCs and the related hill-climbing results.

Work with us

If you are training agents for enterprise workflows and want to stress-test them in a real Simulation Lab, book a demo.

We are also hiring researchers and engineers who want to push the frontier of agent training environments. See open roles at collinear.ai/careers.

That’s it for April. More on the research side coming soon.

Best,

The Collinear Team