Collinear Newsletter #10 - Notes on Frontier AI

Happy March from the Collinear team.

It’s been a big quarter. We moved into a new office in Sunnyvale, launched YC Bench, extended the Simulation Lab, and hit the conference circuit. There’s a lot to cover, so let’s get into it.

New home in Sunnyvale

We outgrew our old space and opened a new office in Sunnyvale. A big thanks to the customers, partners, and friends who stopped by during the first few weeks to check it out.

The sign is up, the whiteboards are full, and the espresso machine is already earning its keep. Good to have a home base.

YC Bench: can frontier models run a startup?

We released YC Bench, the first open-source, long-horizon benchmark with a simulation clock.

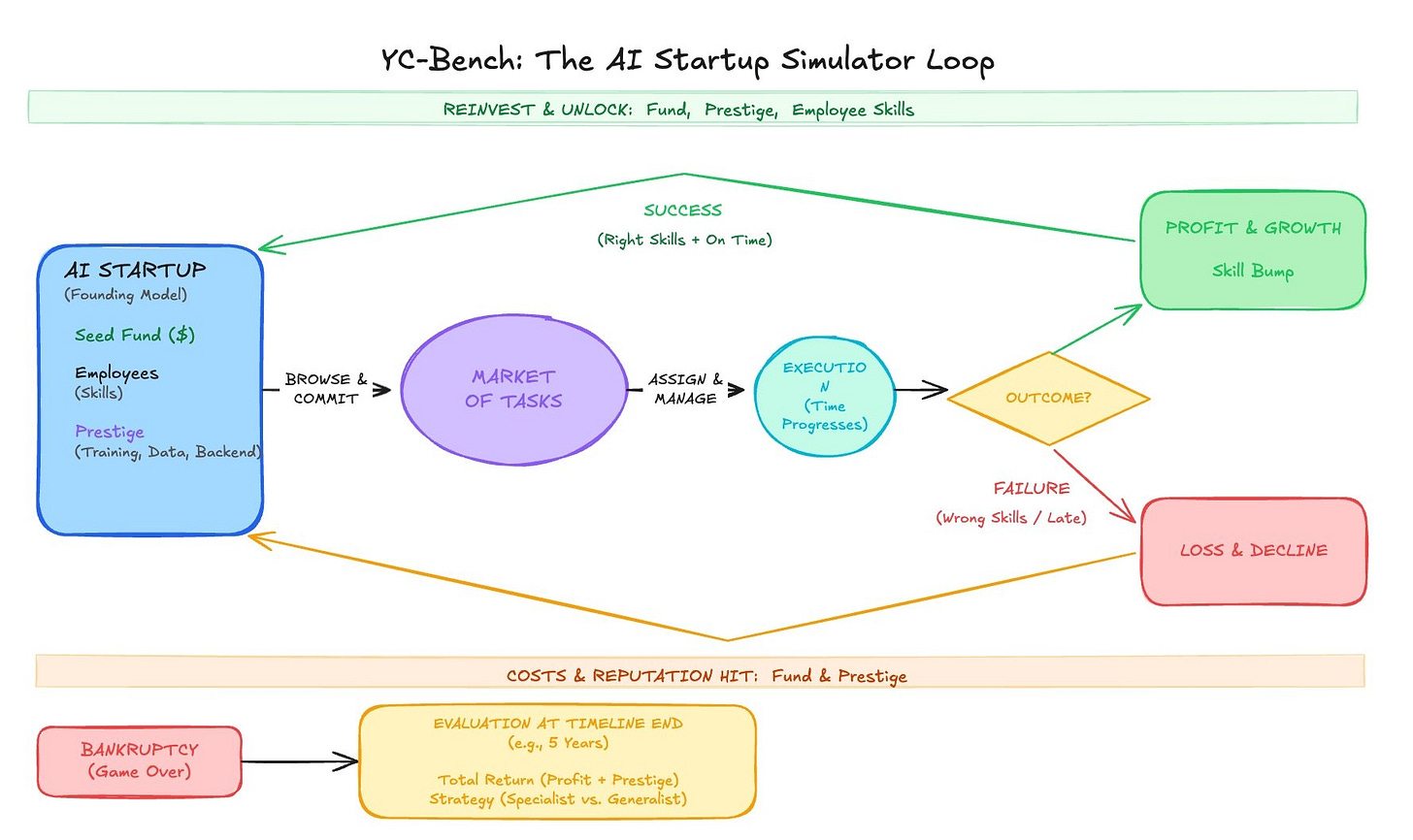

The idea: give a frontier model seed capital, a small team, and a market of tasks, then ask it to run an AI startup. It has to manage employees, hit deadlines, allocate resources, and maximize profit over time.

What we found: a simple rule-based agent consistently outperforms frontier LLMs. That’s not because the task is impossible for them. It’s because they make compounding mistakes early on and never recover. They chase short-term wins, over-parallelize, and adapt too late when conditions change.

This matters because the industry is moving fast toward long-running, multi-step agent workflows, but reliability isn’t keeping pace with that ambition. YC Bench measures exactly that gap. It doesn’t test whether a model can answer a question. It tests whether an agent can hold a coherent strategy over time.

YC Bench is open-source and available on our GitHub. We built it to be extensible. If you’re working on long-horizon agent evaluation, we’d love to hear what you find!

Simulation Lab: what it is and what’s new

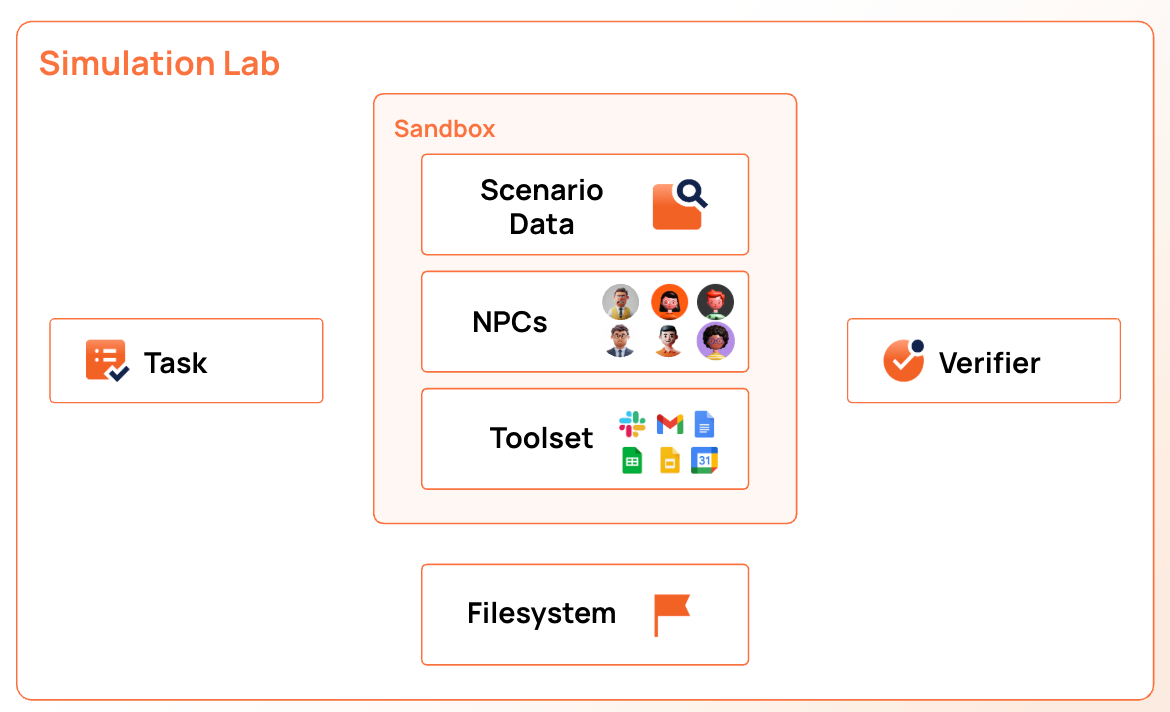

For those new here: the Collinear Simulation Lab is where AI agents learn enterprise work before going to production. Think of it as a practice environment, fully interactive, with simulated data, simulated users (NPCs), real enterprise tooling, and task-specific verifiers. Agents don’t just get tested. They get trained against realistic, messy, multi-step workflows.

Inside a sim lab, you get:

Simulated APIs for enterprise software (HR, Finance, Sales, Customer Service)

NPCs that push back, change their minds, and interrupt

Tasks with ambiguity, missing info, and competing priorities

Scorers that generate task-specific rubrics alongside formal verifiers

This quarter we extended the lab with broader tool coverage and deeper scenario complexity. That means more enterprise surfaces, richer NPC behavior, and tighter integration with RL training loops. The goal stays the same: agents need a world to learn in, and we’re building that world.

If you’re building agents for enterprise workflows and want to stress-test them before they touch production, the Simulation Lab is open for business.

Together AI Conference

Nazneen spoke at the Together AI conference this quarter. Our partnership with Together continues to deepen. TraitBasis, our method for generating realistic simulated users, is now integrated into Together Evals. Builders on Together’s platform can simulate impatient, confused, or inconsistent user personas and see how their models actually hold up when conversations get unpredictable. If you missed the talk, stay tuned for a recap.

That’s it for March. More coming soon on the research side.

-- The Collinear Team