AI's U-235 Problem

Nuclear physics solved for k_eff. What's the AGI equivalent?

The race to AGI isn’t being won by whoever has the most compute or the cleverest architecture. It’s being won by whoever solves a quieter, less glamorous problem: finding enough of the right kind of data to cross the threshold. This is not a new concept.

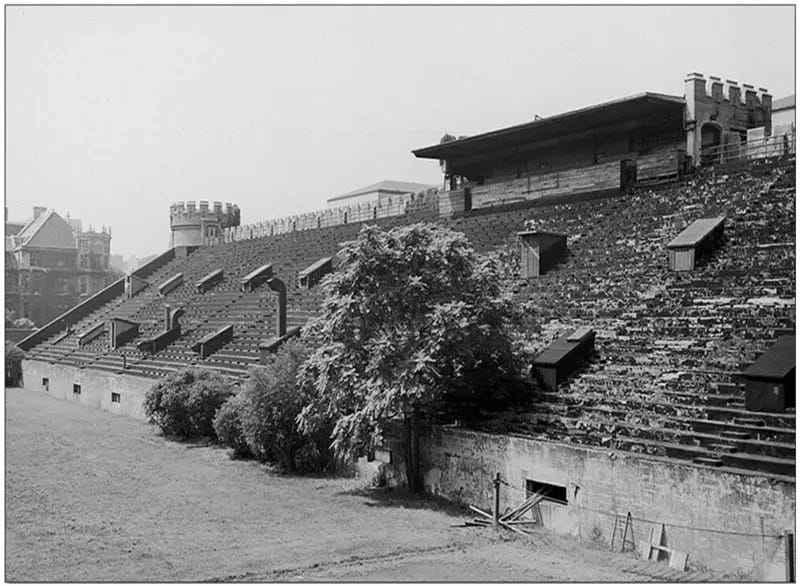

On December 2, 1942, Enrico Fermi and a small team of physicists gathered in a makeshift lab beneath the stands of Stagg Field in Chicago. They had spent months carefully stacking graphite blocks and uranium slugs into a precise arrangement they called Chicago Pile-1. At 3:25pm, they slowly withdrew a control rod (a neutron-absorbing insert that regulates the reaction). The Geiger counters clicked faster. The reaction sustained itself. The nuclear age began when the clicking didn’t stop.

What most people don’t realize is how close they came to never getting there. The core problem wasn’t theory. Leo Szilard had conceived of the nuclear chain reaction nearly a decade earlier, crossing a London street in 1933. He understood it so completely he quietly patented it and handed the rights to the British Admiralty to keep it out of dangerous hands. The physics was known and the threshold was understood. The problem they had was the fuel.

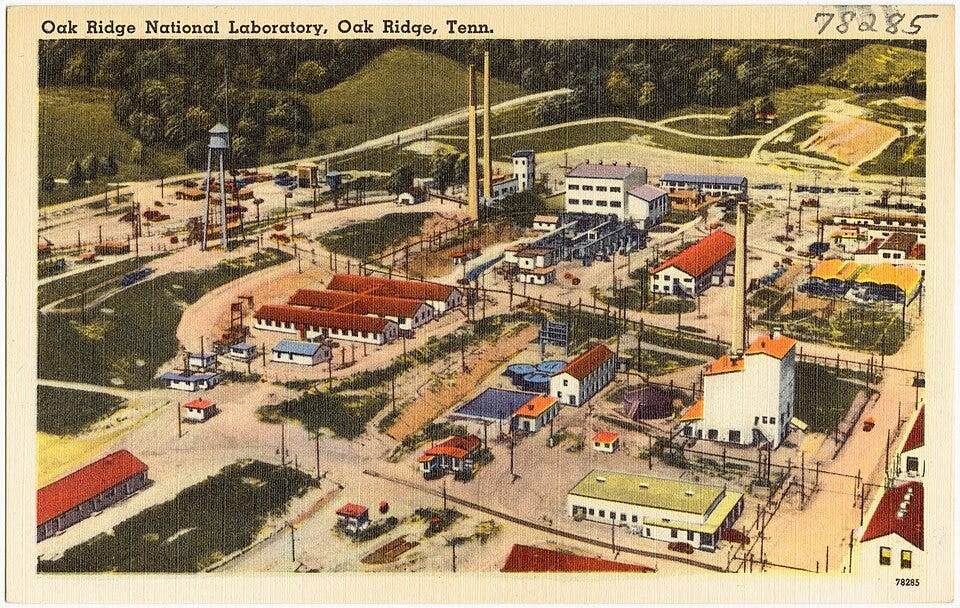

Natural uranium is everywhere. The earth’s crust is full of it. But raw uranium is almost useless for a chain reaction. The isotope that actually fissions, U-235, makes up less than 1% of natural ore. The rest is inert. Volume alone gets you nowhere. Too much of the wrong material actively works against you as it absorbs neutrons and dampens the reaction before it can sustain itself. The real breakthrough of the Manhattan Project wasn’t the bomb. It was Oak Ridge’s K-25 gaseous diffusion plant, built in 1944 to enrich uranium at industrial scale. At the time it was the largest building in the world. Its only job was to concentrate U-235: filtering, separating, amplifying the rare fissile material until there was enough to matter.

The Ore Gets Leaner

Today’s AI models are trained on more data than any human could read in a thousand lifetimes. The models are still running into walls, and the reason maps almost exactly onto Oak Ridge. The reason some data moves models forward and most doesn’t comes down to what a model can actually learn from it. Training works by exposing a model to examples and having it predict what comes next, then correcting it when it’s wrong. The correction is where the learning happens. Boilerplate content, templated writing, repetitive programmatic output: these produce almost no correction signal. The model already knows what comes next.

High-signal data is different. It contains genuine reasoning, unexpected connections, nuanced judgment, edge cases the model hasn’t encountered. Every one of those is a correction opportunity. When the model is wrong, it gets updated and gets sharper.

U-235 atoms fission when struck by a neutron because of specific properties in their nuclear structure. Most uranium atoms absorb the neutron and go quiet. The difference between fissile and inert material is structural, not superficial. High-signal training data works the same way. Generic data absorbs the training pass and goes quiet.

Most new data being generated is programmatic: logs, auto-generated content, boilerplate, synthetic outputs from models that are already mediocre. It’s abundant, cheap, and mostly inert. As it floods in, it dilutes the fraction of genuinely useful signal further. As AI-generated content spreads across the internet, models trained on it learn the average, not the edge. The ore gets leaner the more we mine it.

Marie Curie didn’t find radium by sifting through more rock. She developed new processes to isolate and concentrate what she was looking for. The Manhattan Project built industrial infrastructure specifically designed to separate what mattered from what didn’t. The AI field needs the same shift.

Building Oak Ridge

Two things have to happen in parallel. The first is better curation pipelines: new methods to identify and extract high-signal data from existing sources, smarter filtering, better labeling, clearer definitions of what “exceptional” looks like for each capability domain. The second is synthetic data. Even though the risk of model collapse (the equivalent of contaminating your fuel) is real, waiting for enough naturally occurring high-signal data won’t get us where we’re trying to go. Not all synthetic data techniques are the same, and the differences between them matter a lot. Deliberately designed training data built to fill specific capability gaps is unavoidable.

The most straightforward approach is prompt-based generation: feed a capable model a topic, a domain, or a problem type and ask it to generate training examples at scale. Used carefully, this fills gaps in rare or underrepresented domains. Used carelessly, it produces plausible-sounding noise that makes the training pool worse, not better.

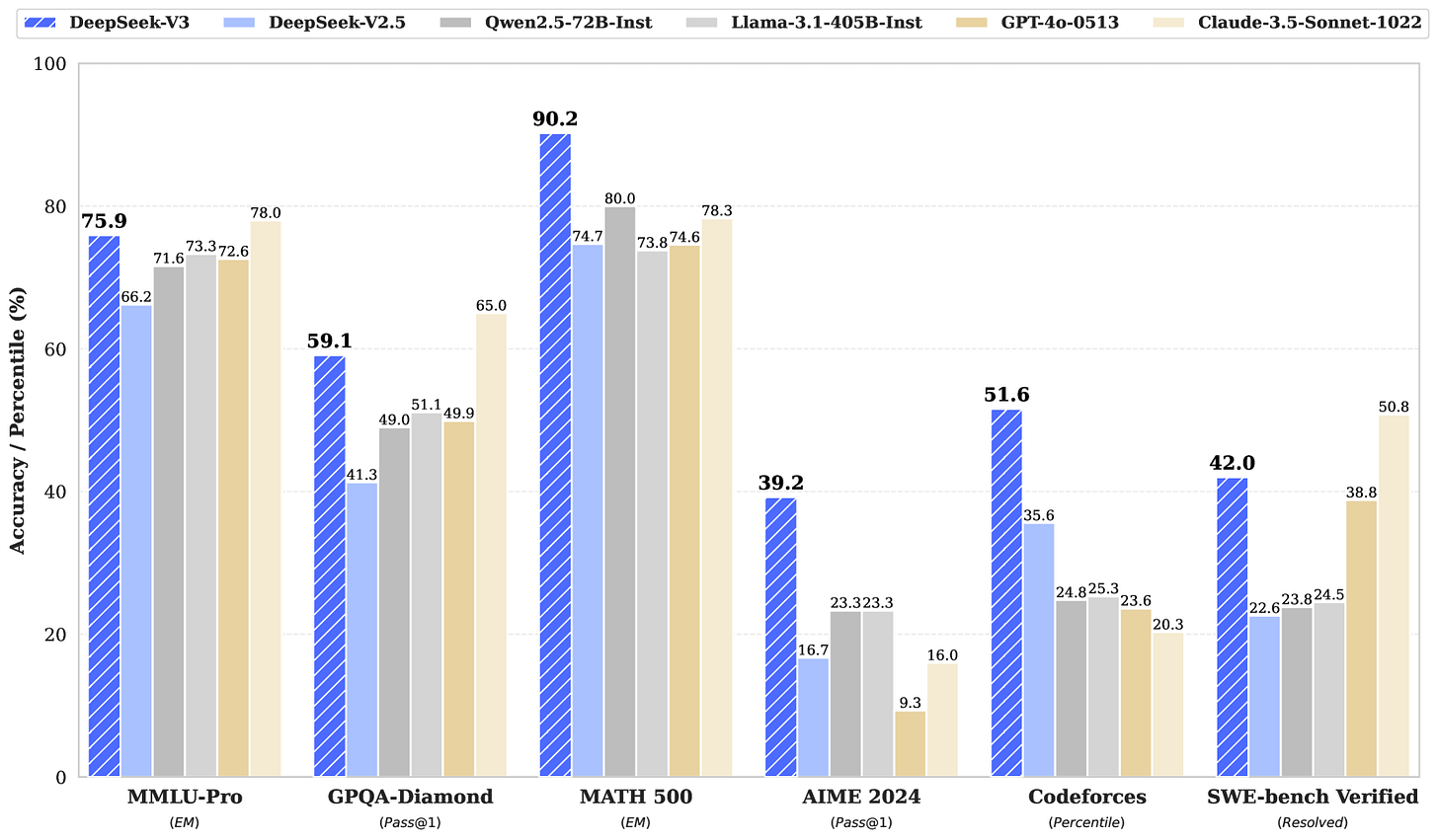

A more sophisticated method is web rewriting: take real content and use a stronger model to transform it into a higher-signal format. DeepSeek did this systematically building DeepSeek-V3. Rather than training on web content directly, they used a pipeline of stronger models to filter, rewrite, and structure data into higher-quality reasoning examples across mathematics, code, and general knowledge. The resulting model matched or outperformed models trained at several times the compute cost, with minimal changes to the underlying architecture. This is an example of using better fuel rods in the same reactor.

A third approach is reinforcement learning from human feedback (RLHF): instead of generating new data from scratch, use human preference signals to identify which model outputs were high quality and train on those. This turns the model’s own outputs into enriched fuel, but only when paired with careful human judgment about what “better” means. Recent work has pushed this further, with new techniques achieving alignment quality comparable to full human annotation using only 6-7% ( 6 7!!! ) of the annotation effort, by targeting human review at the samples that are hardest to label automatically.

The most surprising recent result is pure reinforcement learning with no labeled data at all. DeepSeek’s R1 demonstrated that reasoning capabilities can emerge through pure reinforcement learning, with no human-labeled reasoning trajectories required. The model was rewarded for getting verifiable answers right (math problems, code that actually runs) and developed self-reflection and strategy as emergent behavior. It’s the closest thing yet to a model generating its own fissile material.

At Collinear AI we realize the theory isn’t the bottleneck. The hard part is making these techniques reliable at scale: turning prompt-based generation, web rewriting, and RLHF from one-off research sprints into something teams can run repeatedly without rebuilding from scratch each time. Most organizations treat each data effort as a custom project. SimLab is how Collinear makes this routine.

The contamination risk is real and worth understanding. Shumailov (and others) demonstrated that repeatedly training on synthetic data leads to model collapse, a finding that attracted significant attention given how close current models are to exhausting available high-quality data. The mechanism: recursive training on synthetic outputs causes models to produce repetitive, narrowing results, effectively losing the tails of the original data distribution. The model gets so good at the average that it loses the edges.

The answer isn’t to avoid synthetic data. Research shows that keeping real data in the mix and layering synthetic data on top, rather than replacing real data entirely, avoids the degenerative feedback loop. The ratio and sequencing matter enormously. Synthetic data added to a real-data foundation behaves very differently from synthetic data trained on top of synthetic data. The centrifuge has to be calibrated, not just built.

Critical Mass

Before fission, energy was extractive. You burned coal, oil, or gas: feed the furnace, get energy out, repeat. Power was linear, bounded by what you could mine and move.

Fission changed that. A reaction releases neutrons that trigger more reactions. Above critical mass it’s self-sustaining: withdraw the control rod once and the chain continues without further input. A kilogram of enriched uranium and a kilogram of coal aren’t on the same spectrum. Critical mass is the threshold where the system stops depending on external input and begins feeding itself.

Every AI model today is extractive in the same way pre-fission energy was. We supply the data, the compute, the architecture decisions, the fine-tuning, the human feedback. The model improves because we keep feeding it. Remove the input, the improvement stops.

AGI is the point where that changes. A system past the AGI threshold can reason about its own limitations, identify what it needs to learn, generate or seek out the training signal it needs, and improve its own architecture. The chain reaction sustains itself.

The gap between today’s best models and that threshold is categorical, the same way fission and combustion aren’t variations of the same phenomenon. Current models are extraordinarily capable combustion engines. Once a system crosses that line, the rate of improvement stops being limited by what we supply. It becomes limited by the physics of the system itself. Human researchers won’t be the primary driver anymore. The reaction moves at its own pace, on its own terms. Getting the enrichment right before we get there is the only real leverage point we have.

Two Races to the Same Threshold

The world is currently running two parallel races to the same kind of threshold, and almost nobody talks about them together.

The first race is to fusion. Thirty-five nations are collaborating on ITER, the international fusion project in southern France. It’s been delayed repeatedly and now targets 2039. Private companies are moving faster, but even the boldest credible estimates put commercial fusion in the early 2030s at best. The physics is understood. The engineering is the hard part.

The second race is to AGI. Both have the same core structure: a threshold that is theoretically understood, a fuel problem that is practically unsolved, and a lot of engineering standing between the two. And the two races may be more entangled than they first appear.

The race to build AGI may literally require the fusion race to progress first, or at least the fission one. The energy demands make that dependency increasingly hard to ignore.

AI data centers are already consuming power at a scale that strains the grid. In 2024, global data center electricity consumption hit around 415 terawatt-hours (about 1.5% of world electricity use), growing at a rate more than four times faster than overall global electricity consumption.  By some estimates, that figure could approach 945 TWh by 2030 (roughly equivalent to Japan’s entire annual electricity consumption) with high-growth scenarios pushing past 1,700 TWh by 2035.

That’s before AGI. A self-sustaining AI system iterating on itself continuously would require orders of magnitude more compute than today’s training runs. The chain reaction doesn’t just need enriched fuel. It needs an enormous, uninterrupted power supply to keep running once it starts. The physical energy problem and the cognitive threshold problem are coupled. Nuclear physics even has a name for it.

k_eff measures whether a chain reaction is self-sustaining. k_eff < 1 and the reaction fizzles. k_eff ≥ 1 and it runs on its own.

AGI doesn’t have an equivalent metric yet. But if it did, it might look something like this: does each generation of capability produce enough leverage to fund, power, and build the next one? We can call it a_eff. Right now, the honest answer is that we don’t know if a_eff ≥ 1, and most of the serious debates in AI (about scaling laws, compute returns, energy constraints) are really arguments about that number without naming it.

The Clock

Six years ago, the median expert estimate for AGI sat comfortably in the 2060-2070 range. As of early 2026, that number has collapsed to around 2033.  The compression is accelerating. Dario Amodei said at Davos earlier this year that AGI will likely arrive within a few years, possibly by 2027. Demis Hassabis of Google DeepMind put it more cautiously: roughly a 50% chance by 2030.

Fusion timelines haven’t moved the same way. ITER’s deuterium-tritium milestone is 2039. Commercial fusion power is likely a decade beyond that. The private sector is more aggressive, but even the boldest credible estimates put sustained commercial fusion in the early 2030s at best. Both thresholds represent the same kind of categorical shift: a self-sustaining reaction that permanently changes what’s possible. Fusion solves the physical energy problem and AGI solves the cognitive one, but both require getting the enrichment right before the reaction will hold.

The current trajectory suggests AGI crosses its threshold first, probably by a significant margin. That means the data enrichment problem is urgent in a way that plasma confinement simply isn’t. Fusion researchers have until the 2030s. The people working on data quality and synthetic enrichment for AI may have considerably less time, and far less certainty about when the window closes.

AGI isn’t blocked by a missing theoretical insight. Szilard had that moment crossing a London street in 1933. It isn’t blocked by compute or architecture alone. It’s blocked by the same thing that stood between Szilard’s patent and Fermi’s reaction: not enough of the right material, concentrated precisely enough, arranged carefully enough to sustain itself.

Fermi’s team stacked blocks for months and did the math until the geometry was right and the reaction kept going.

That’s what we’re building toward. Not a dramatic moment, but a controlled, deliberately constructed threshold where the system crosses over and begins to sustain its own improvement. We need an AI Oak Ridge.

That work is happening now, in pieces, across a lot of teams. If you're at a frontier lab or AI-native company working on model improvement (capability gaps, post-training data, pre-deployment testing), talk to one of our researchers.

Building the Centrifuge

Oak Ridge took two years and 24,000 workers to separate enough U-235 for the reactor to hold. The enrichment problem for AI is a similar order of undertaking, and no single team will solve it.

At Collinear, we’re building part of it. SimLab is our simulation lab for AI agents: the infrastructure to generate, curate, and verify high-signal data at scale. Simulated enterprise environments, NPC users, verifiable tasks, training-ready rollouts. It’s designed to make deliberate enrichment a routine capability for the teams that need it most.

If you’re training reasoning models, shipping agents into production, or watching half your team’s time disappear into eval data hygiene, we should talk.